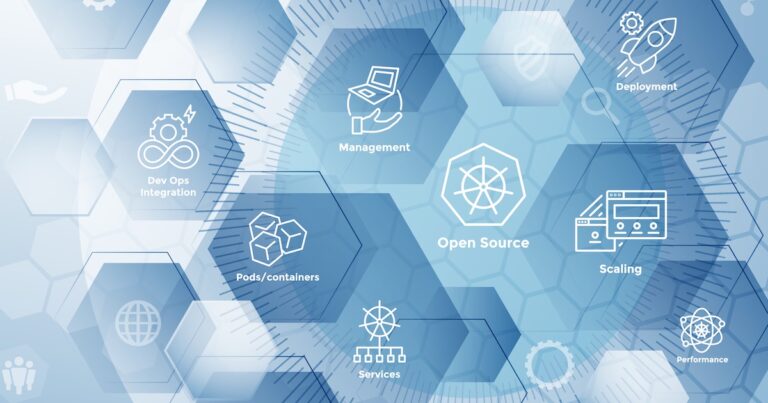

Microservices, containers, and cloud-native services have surged in popularity because of Kubernetes’ success. Kubernetes (K8s) provides comprehensive support for developing and deploying large applications in small pieces, scaling them out and in, observing every deployed component, and much more.

That breadth also makes the Kubernetes architecture heavyweight and complex to manage, so developers make many common mistakes while working with K8s. Hence, understanding the basic architecture of Kubernetes and how it works is essential to making effective use of the benefits it offers.

What is Kubernetes architecture?

Kubernetes is a container orchestration platform. A Kubernetes cluster consists of a master (the control plane) and multiple worker nodes. It manages containers across the cluster through a set of APIs and uses command-line tooling to automate the deployment, scaling, and management of containerized applications.

Let’s explore 10 common Kubernetes mistakes, how they usually happen, and how you can fix them.

1. Ignoring Health Checks

To effectively manage containers, Kubernetes needs a way to check their health to see if they are working properly and able to receive traffic. Kubernetes uses health checks, also known as probes, to determine whether instances of your app are running and responsive. To check the health of a container at different stages of its lifecycle, it uses three kinds of probes: liveness, readiness, and startup.

The liveness check lets Kubernetes determine whether your app is alive. The kubelet agent running on each node uses liveness probes to ensure that containers are running as expected, and it restarts a container whose liveness probe keeps failing. Readiness checks run during the entire lifecycle of the container. Kubernetes uses this probe to know when the container is ready to accept traffic; a container that is not ready stops receiving traffic from Services. The startup probe determines when the application in the container has started successfully, and the other probes are held off until it succeeds. If the startup check fails, the container is killed and restarted according to the pod’s restart policy.
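
The three probes can be sketched as a container spec. The snippet below is a minimal illustration rather than a recommended configuration: the container name, the image, the `/healthz` and `/ready` paths, port 8080, and every timing value are assumptions made up for the example. It builds the spec as a Python dictionary and prints it as JSON, which `kubectl apply -f` accepts in the same way as YAML.

```python
import json


def http_probe(path, port, period_seconds, failure_threshold):
    """Build an HTTP GET probe in the shape the Pod API expects."""
    return {
        "httpGet": {"path": path, "port": port},
        "periodSeconds": period_seconds,
        "failureThreshold": failure_threshold,
    }


def failure_window_seconds(probe):
    """Approximate time a probe may keep failing before Kubernetes acts on it."""
    return probe["periodSeconds"] * probe["failureThreshold"]


container = {
    "name": "web",  # hypothetical container name
    "image": "registry.example.com/web:1.4.2",  # hypothetical image
    "ports": [{"containerPort": 8080}],
    # Gives a slow-starting app up to 30 * 5 = 150 seconds to come up.
    "startupProbe": http_probe("/healthz", 8080, period_seconds=5, failure_threshold=30),
    # Once started: restart the container if it stops answering.
    "livenessProbe": http_probe("/healthz", 8080, period_seconds=10, failure_threshold=3),
    # Once started: stop routing traffic while the app reports not ready.
    "readinessProbe": http_probe("/ready", 8080, period_seconds=5, failure_threshold=2),
}

pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "web"},
    "spec": {"containers": [container]},
}

if __name__ == "__main__":
    print(json.dumps(pod, indent=2))
```

Separating the liveness and readiness endpoints, as assumed here, lets an app that is temporarily overloaded drop out of rotation without being restarted.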

2. Using The ‘Latest’ Tag

The latest tag is not descriptive and is hard to work with. The Kubernetes docs are very clear about using the :latest tag on Docker images in a production environment:

You should avoid using the :latest tag when deploying containers in production, as it makes it difficult to track which version of the image is running and hard to roll back.

If you’re working with an image tagged “latest,” you have no information about it other than the image ID. Unless you have two differently tagged, static images, operations like deploying a new version of your app or rolling back to a previous one cannot be done reliably. No tool can make it pleasant to deploy “new:latest image” in place of “old:latest image”.
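
One way to catch this before deployment is a small check on image references. The sketch below is an assumption about how such a check might look, not part of any Kubernetes tooling; the function names and sample image names are hypothetical. It treats a reference as pinned when it carries a digest or an explicit tag other than `latest`, and it treats a reference without a tag as unpinned, because container tooling resolves a missing tag to `latest`.

```python
def image_tag(image):
    """Return the tag of an image reference, or None when no tag is given."""
    name = image.split("@", 1)[0]            # drop any digest suffix
    last_segment = name.rsplit("/", 1)[-1]   # skip a registry host:port prefix
    if ":" in last_segment:
        return last_segment.rsplit(":", 1)[1]
    return None


def is_pinned(image):
    """True when the reference names a digest or a tag other than 'latest'."""
    if "@sha256:" in image:
        return True
    tag = image_tag(image)
    return tag is not None and tag != "latest"


if __name__ == "__main__":
    for ref in ("nginx", "nginx:latest", "nginx:1.25.3", "localhost:5000/app"):
        print(ref, "pinned" if is_pinned(ref) else "NOT pinned")
```

A check like this could run in CI against every manifest so that an unpinned image never reaches production.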

3. Deploying A Service To The Wrong Kubernetes Node

Kubernetes is a complex system in which master and worker nodes handle different responsibilities and work together to maintain the state of your applications. The master nodes run the Scheduler, Controller Manager, API Server, and etcd components and are responsible for managing the cluster. Essentially, they are the brain of the cluster! Worker nodes, on the other hand, each run the kubelet, kube-proxy, and a container runtime (such as Docker or containerd) and are used to run user-defined containers. Worker nodes are managed by the Kubernetes control plane.

The most common mistake beginners make is deploying a container to the wrong node. If you deploy your service on the wrong node, it may not work properly, or at all! Your new containers may also take longer than expected to start, because they have to wait for the scheduler to assign them to an available node before anything can run.

To avoid this, you should always know what kind of node your services are running on (master or worker) before deploying them. You should also check whether the pod can reach the other pods in the cluster it needs to communicate with before launching any containers.
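
One common way to steer a pod toward the right kind of node is a `nodeSelector` in the pod spec. The sketch below imitates only the label-matching rule (every key and value in the selector must appear in the node’s labels); the real scheduler also weighs taints, resources, and other constraints. The node names and the `disktype` label are hypothetical.

```python
def node_matches(node_selector, node_labels):
    """True when every selector key/value pair is present in the node's labels."""
    return all(node_labels.get(key) == value for key, value in node_selector.items())


# Hypothetical nodes and labels, for illustration only.
nodes = {
    "worker-1": {"kubernetes.io/os": "linux", "disktype": "ssd"},
    "worker-2": {"kubernetes.io/os": "linux", "disktype": "hdd"},
}

pod_spec = {
    "nodeSelector": {"disktype": "ssd"},
    "containers": [{"name": "db", "image": "registry.example.com/db:3.1.0"}],
}

eligible = [
    name for name, labels in nodes.items()
    if node_matches(pod_spec["nodeSelector"], labels)
]

if __name__ == "__main__":
    print(eligible)
```

If no node carries the requested labels, the pod stays in the Pending state, which is one way a container ends up waiting longer than expected.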

4. Not Employing Deployment Models

A Kubernetes deployment strategy helps meet application development needs and lets you reach the desired state of the application faster. Technically, a Deployment provides declarative updates for Pods and ReplicaSets. Of the roughly five deployment methods commonly used with Kubernetes, three are highly popular: blue-green, canary, and rolling.

In the blue/green deployment model, you use two production environments, as identical as possible. You then move user traffic from the previous version of the app to the almost identical new release, both running in production. Here the old version is considered blue and the new version green. All traffic is sent to blue by default, and once the new version (green) meets all the requirements, traffic is switched from the old version to the new one (blue to green).

Canary deployment is a more managed and controlled method of deploying applications. It facilitates rolling out releases to a subset of users or servers. The idea behind a Canary deployment is to first deploy the change to a small subset of servers, test it, and then roll it out to the rest of the servers.

A rolling deployment gradually replaces previous versions of an application with newer versions until the infrastructure the application runs on has been completely replaced. It is the slowest process of the three, and unlike blue/green deployments, there is no environment separation between the old and new application versions in a rolling deployment.

Kubernetes offers several deployment strategies to meet a wide range of application development and deployment needs. Review the Kubernetes deployment options to decide which is the best fit for your project requirements.
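
For the rolling strategy, a Deployment’s `maxSurge` and `maxUnavailable` settings bound how many pods exist during the rollout. The sketch below works through that arithmetic under the documented rounding rules (a percentage for `maxSurge` rounds up, one for `maxUnavailable` rounds down); the helper name and the replica counts are assumptions for illustration.

```python
# Strategy block as it would appear under a Deployment's spec.
strategy = {
    "type": "RollingUpdate",
    "rollingUpdate": {"maxSurge": "25%", "maxUnavailable": "25%"},
}


def rollout_bounds(replicas, max_surge_pct=25, max_unavailable_pct=25):
    """Return (minimum available pods, maximum total pods) during a rollout."""
    surge = (replicas * max_surge_pct + 99) // 100       # rounds up
    unavailable = replicas * max_unavailable_pct // 100  # rounds down
    return replicas - unavailable, replicas + surge


if __name__ == "__main__":
    for replicas in (4, 10):
        low, high = rollout_bounds(replicas)
        print(f"{replicas} replicas: at least {low} available, at most {high} total")
```

With 10 replicas and both values at 25%, the rollout keeps at least 8 pods available and never runs more than 13 at once.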

5. CrashLoopBackOff

If CrashLoopBackOff has ever shown up as a status in your Kubernetes CLI, your pods were in a constant state of flux: one or more containers were failing and restarting repeatedly. There can be many reasons why a pod falls into a CrashLoopBackOff state, including errors in the Kubernetes deployment itself (for example, deprecated Docker versions), missing dependencies, errors in liveness probes, and changes caused by recent updates.

CrashLoopBackOff typically occurs because each pod inherits a default restart policy of Always upon creation, so a container that keeps failing is restarted again and again, with a growing delay between attempts. You can run the standard kubectl get pods command to see the status of any pod that is currently in CrashLoopBackOff.

Tools that support Kubernetes-native monitoring let users view and graph CrashLoopBackOff events over time. Many of them can be integrated with communication channels like Slack to notify users in real time. Users can also view the events, logs, and metrics from before the pod crashed and resolve the error accordingly.
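
The same information is available in machine-readable form from `kubectl get pods -o json`. The sketch below scans that structure for containers waiting with the reason `CrashLoopBackOff`; the sample data is hand-written for illustration (the pod and container names are hypothetical) and contains only the fields the function reads.

```python
def crash_looping(pod_list):
    """Return (pod, container, restart count) for containers in CrashLoopBackOff."""
    found = []
    for pod in pod_list.get("items", []):
        statuses = pod.get("status", {}).get("containerStatuses", [])
        for status in statuses:
            waiting = status.get("state", {}).get("waiting", {})
            if waiting.get("reason") == "CrashLoopBackOff":
                found.append(
                    (pod["metadata"]["name"], status["name"], status.get("restartCount", 0))
                )
    return found


# Hand-written sample in the shape of `kubectl get pods -o json` output.
sample = {
    "items": [
        {
            "metadata": {"name": "api-7d9f"},
            "status": {"containerStatuses": [
                {"name": "api", "restartCount": 12,
                 "state": {"waiting": {"reason": "CrashLoopBackOff"}}},
            ]},
        },
        {
            "metadata": {"name": "web-5c2a"},
            "status": {"containerStatuses": [
                {"name": "web", "restartCount": 0, "state": {"running": {}}},
            ]},
        },
    ]
}

if __name__ == "__main__":
    for pod, container, restarts in crash_looping(sample):
        print(f"{pod}/{container} restarted {restarts} times")
```

For each pod it reports, `kubectl describe pod` and `kubectl logs --previous` are the usual next steps for finding out why the container exited.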

6. Resources — Requests and Limits

One of the most common beginner mistakes when working with Kubernetes is not setting requests and limits for CPU and memory usage. Without them, worker nodes can become ‘overcommitted,’ and applications perform poorly. On the other hand, if the limits are set too low, the container’s CPU is throttled and it performs poorly, or it exceeds its memory limit and is terminated with an OOMKilled error. Both situations keep Kubernetes from delivering the desired experience.

The ideal strategy is to allocate the right amount of CPU and memory. One can also perform stress testing against their application to determine the limit at which the system or software breaks. Based on how the system responds in various situations, one can define resources for requests and limits to ensure uninterrupted service and performance.
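
As a sketch of what this looks like in a container spec, the snippet below shows a `resources` block and a small check that reports which fields are missing. The container names, images, and the specific CPU and memory values are assumptions chosen for illustration; appropriate values come from testing your own workload.

```python
REQUIRED_FIELDS = [
    ("requests", "cpu"),
    ("requests", "memory"),
    ("limits", "cpu"),
    ("limits", "memory"),
]


def missing_resource_fields(container):
    """List the request/limit fields a container spec does not set."""
    resources = container.get("resources", {})
    return [
        f"{section}.{name}"
        for section, name in REQUIRED_FIELDS
        if name not in resources.get(section, {})
    ]


# Hypothetical containers: one with resources set, one without.
tuned = {
    "name": "api",
    "image": "registry.example.com/api:2.3.0",
    "resources": {
        "requests": {"cpu": "250m", "memory": "256Mi"},  # what the scheduler reserves
        "limits": {"cpu": "500m", "memory": "512Mi"},    # ceiling enforced at runtime
    },
}
bare = {"name": "worker", "image": "registry.example.com/worker:2.3.0"}

if __name__ == "__main__":
    for container in (tuned, bare):
        print(container["name"], missing_resource_fields(container) or "ok")
```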

7. Not Considering Monitoring And Logging Requirements

Kubernetes is a multi-layered solution. The entire deployment is a cluster, and there are nodes inside each cluster. Each node runs one or more pods, which are the core components that handle your containers, and nodes and pods are managed by the control plane. Inside the control plane, there are many smaller pieces such as kube-controller-manager, cloud-controller-manager, kube-apiserver, kube-scheduler, etc. These abstractions all serve to help Kubernetes efficiently support your container deployments. Although they are all very useful, they generate many metrics.

On top of that, Kubernetes is used to support modern software development practices, of which microservices are the biggest part. Microservices also require continuous monitoring to ensure they stay healthy. Therefore, set up a monitoring system and a log aggregation server before deploying your application on Kubernetes to keep tabs on the important metrics, so you can rest assured everything is working.

8. Overprivileged Containers

From a security perspective, the most common mistake developers make while using Kubernetes is giving containers too many privileges, such as the capabilities of the host machine, which allow access to resources that are not accessible from ordinary containers. An example of a privileged container is one that runs a Docker daemon inside a Docker container. Running privileged containers is risky, as a compromised container can give cybercriminals a backdoor into the enterprise’s system.

A quick fix is to disallow privilege escalation. The allowPrivilegeEscalation setting controls whether a process in the container can gain more privileges than its parent process at runtime, so it should be set to false. There are more advanced measures as well. You must avoid giving the CAP_SYS_ADMIN capability to your container, as it covers more than 25 privileged actions and comes close to giving the container full privileges. Another risk is giving the container access to the host file system.

This means the container can be used to compromise the whole host by replacing binaries with malicious versions or tampering with the Kubernetes or Docker configuration. Therefore, host paths such as /etc/kubernetes, /bin, /var/run/docker.sock, and the like shouldn’t be mounted into your containers.
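
These rules can be sketched as a small audit over a pod spec. The code below is an illustrative assumption, not an existing tool: it flags privileged containers, containers where `allowPrivilegeEscalation` is not explicitly false, containers that add the `SYS_ADMIN` capability (the name Kubernetes uses for CAP_SYS_ADMIN), and `hostPath` volumes under a few sensitive paths. The list of paths and the sample specs are hypothetical.

```python
# Sensitive host paths to flag; an illustrative list, not an exhaustive one.
RISKY_HOST_PATHS = ("/etc/kubernetes", "/bin", "/var/run/docker.sock")


def audit_container(container):
    """Return a list of risky security settings found on one container."""
    ctx = container.get("securityContext", {})
    findings = []
    if ctx.get("privileged", False):
        findings.append("privileged")
    # Flag anything that is not explicitly set to False.
    if ctx.get("allowPrivilegeEscalation") is not False:
        findings.append("allowPrivilegeEscalation not false")
    added = ctx.get("capabilities", {}).get("add", [])
    if "SYS_ADMIN" in added or "CAP_SYS_ADMIN" in added:
        findings.append("SYS_ADMIN capability")
    return findings


def risky_host_mounts(pod_spec):
    """Return hostPath volume paths that fall under a sensitive host path."""
    risky = []
    for volume in pod_spec.get("volumes", []):
        path = volume.get("hostPath", {}).get("path")
        if path and any(path == p or path.startswith(p + "/") for p in RISKY_HOST_PATHS):
            risky.append(path)
    return risky


hardened = {
    "name": "api",
    "securityContext": {
        "privileged": False,
        "allowPrivilegeEscalation": False,
        "capabilities": {"drop": ["ALL"]},
    },
}
risky = {
    "name": "dind",
    "securityContext": {"privileged": True, "capabilities": {"add": ["SYS_ADMIN"]}},
}
pod_spec = {
    "containers": [hardened, risky],
    "volumes": [
        {"name": "sock", "hostPath": {"path": "/var/run/docker.sock"}},
        {"name": "cache", "emptyDir": {}},
    ],
}

if __name__ == "__main__":
    for container in pod_spec["containers"]:
        print(container["name"], audit_container(container) or "ok")
    print("host mounts:", risky_host_mounts(pod_spec))
```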

9. Accidentally Exposing Internal Services To The Internet

Edge services are crucial for modern applications exposed to the internet and act as the gateway to all other services. They are also a source of concern for DevOps teams using Kubernetes. There are many ways internal services could be accidentally exposed to the internet, potentially giving away access to sensitive data. These services require strong authentication and access control so that they can be accessed only from other trusted services.

Therefore, if you are using an additional external load balancer, ingress controller, node port, etc., make sure these are logged and validated. Moreover, first connections from public IPs to a workload should also raise an alert so that accidental exposure does not go unnoticed. You can also use a network map to determine if any public services have direct access to sensitive resources, internal databases, or vaults.
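
A simple first pass at this validation is to list every Service that can be reached from outside the cluster network. The sketch below reads the structure produced by `kubectl get services --all-namespaces -o json` and reports Services of type `NodePort` or `LoadBalancer`, plus any that set `externalIPs`. The sample data is hand-written and the service names are hypothetical; whether a reported Service is actually reachable from the internet still depends on firewalls and cloud settings, so treat the output as a review list.

```python
def externally_reachable(service_list):
    """Return (namespace, name, type) for Services exposed beyond the cluster."""
    exposed = []
    for svc in service_list.get("items", []):
        spec = svc.get("spec", {})
        svc_type = spec.get("type", "ClusterIP")
        if svc_type in ("NodePort", "LoadBalancer") or spec.get("externalIPs"):
            meta = svc["metadata"]
            exposed.append((meta.get("namespace", "default"), meta["name"], svc_type))
    return exposed


# Hand-written sample in the shape of `kubectl get services -A -o json` output.
sample = {
    "items": [
        {"metadata": {"namespace": "shop", "name": "frontend"},
         "spec": {"type": "LoadBalancer"}},
        {"metadata": {"namespace": "shop", "name": "orders-db"},
         "spec": {"type": "ClusterIP"}},
        {"metadata": {"namespace": "ops", "name": "metrics"},
         "spec": {"type": "NodePort"}},
    ]
}

if __name__ == "__main__":
    for namespace, name, svc_type in externally_reachable(sample):
        print(f"{namespace}/{name}: {svc_type}")
```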

10. Not Having Standard Operating Procedures Or Policies In Place

Kubernetes is powerful because it gives teams control over infrastructure they didn’t have before, including teams that may not have the experience to deploy applications securely to production. It has moved business-critical operations, such as security, to development teams.

Organizations moving to Kubernetes need to impose limits on development teams through configuration policies, both to avoid the mistakes mentioned earlier (as well as many others that we haven’t listed) and to ensure that the infrastructure is maintained after a deployment has been marked for significant changes. These policies should protect the full development and deployment process without affecting development agility and market momentum.

Conclusion

Kubernetes offers significant advantages for modern cloud-based applications, but organizations need a new approach to monitoring to fully reap its benefits. Kubernetes presents unique observability challenges, and traditional monitoring techniques are insufficient to gain insight into cluster health and resource allocation. By understanding the complexities behind Kubernetes monitoring, you can better identify a solution that will allow you to get the most value out of your Kubernetes deployment.